Lynnette

AI tutoring, reimagined for accessibility and kindness

Overview

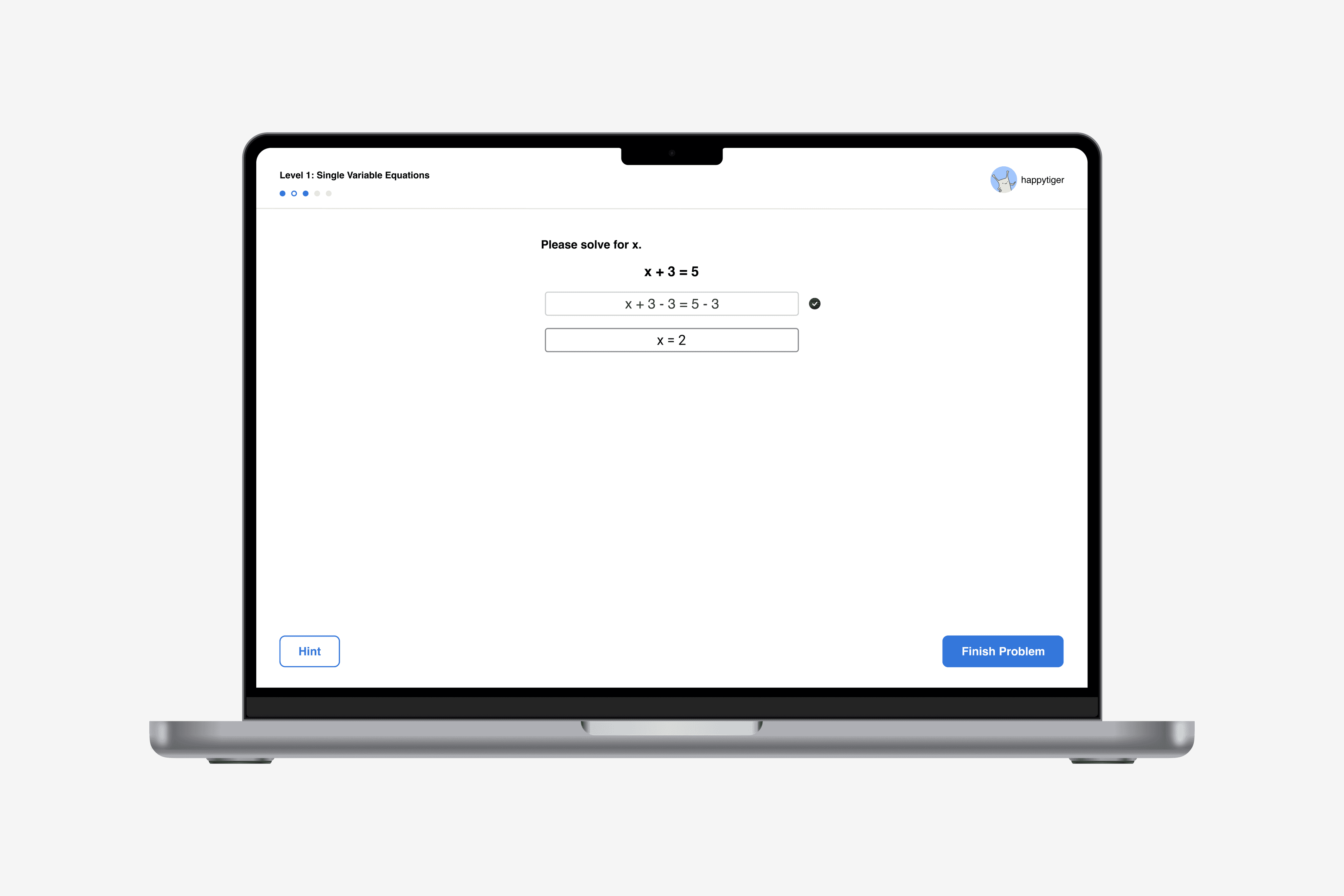

Lynnette is an AI-based tutoring system that provides middle school students with an interface for step-by-step mathematical problem-solving.

I spent a summer at the Carnegie Mellon Human-Computer Interaction Institute spearheading a data-driven system redesign, bringing design thinking to a product used to test learning theories. Beginning with a body of literature on Intelligent Tutoring Systems and a large set of unfiltered data on Lynnette's student interactions, I identified key improvements, prototyped and developed solutions to the issues I uncovered, and handed off design methods for researchers to replicate.

Role

UX Design and Research Intern

National Science Foundation Funded

Advised by

Tomohiro Nagashima

Timeline

10 Weeks

Tools

Figma, Miro, HTML/CSS/JS



Problem Space

Design for learning

Dr. Aleven and his team at the HCII primarily use Lynnette to test learning theories in National Science Foundation-funded projects. Their prior research indicated Lynnette's effectiveness in student learning, but I was recruited to analyze and improve it from a design perspective. Data-driven redesigns, while common in product design, are rare but growing in research literature. We identified the following research question to guide our process:

As a product designer working at a research institution, I aimed to not only improve Lynnette as a product, but also contribute to the advancement of child-computer interaction.

Research

What's an ITS? & Other groundwork

Lynnette—before

Literature Review

To understand the context and constraints of the effort, I surveyed literature on educational technology and, more specifically, Intelligent Tutoring Systems (ITS). I learned that an ITS meets three criteria: it provides feedback, offers hints, and supports step-by-step problem-solving. Lynnette satisfied all three.

ITS are structured this way because research shows that providing reasoning for solved steps deepens conceptual understanding of procedures. Middle-school students often struggle with understanding the principles behind algebraic equations (e.g. memorizing steps rather than learning why they are applied). Thus, cognitive tutors like Lynnette support learning by doing, monitoring learning through performance. Reading about data interpretation gave me a starting point for unpacking problem areas.

DataShop Exploration

The lab maintains a data analysis tool called DataShop, which I used to visualize datasets recorded from past student interactions with Lynnette. I supplemented all of my DataShop findings by observing the steps students had taken, using a step-by-step replay analysis tool created by Max, a developer at the HCII.

Cross-referencing findings from my various data analysis methods, I uncovered many of Lynnette’s challenging use cases. I began hypothesizing reasons for these difficulties (i.e. specific usability issues). For each hypothesis, I came up with a few questions on 1) the underlying reasons for the hypothesis and 2) how we might solve these problems. I also began noting corresponding improvement suggestions early in the process.

I believed that casting a wide net and keeping a backlog of Lynnette usability issues would allow future designers and developers to continue developing the product after I handed off my design processes.

UX Research

The findings from my data analysis pointed to issues with Lynnette's design. Thus, I proposed a cognitive walkthrough, heuristic analysis, and competitor analysis that would allow us to evaluate the usability of the system against industry standards to identify pain points, areas of improvement, and opportunities. I chose these systematic methods because they could provide broad UX insights rather quickly and did not require additional resources. As the designer on the team, I volunteered to conduct these sessions with anyone interested in joining.

Defining the Problem

Narrowing scope, being realistic

At this point, I had a running list of Lynnette issues. I felt it was crucial to conduct user interviews to understand students’ mental models and why the data was showing these insights.

We were unfortunately not able to speak with the middle-school students during their summer break, so we used a proxy: a teacher who led multiple classroom studies. Using this proxy also allowed me to co-generate improvement ideas with an educator's perspective. I aimed to answer the following questions:

In this session, the teacher discussed the issues and categorized them by priority, from high to low. Using a closed card sort to guide the discussion, I focused my questions on student goals and how a teacher would support students in each scenario.

Developing a Prioritization Framework

Snapshot of Issue List

I had grown the aforementioned list of issues into a detailed document in which I aimed to (1) collect issues; (2) present the issues as design goals; (3) prioritize the goals; and (4) rewrite the goals as questions to be designed for.

Figma time. I was so excited by the patterns I found that I wanted to design from scratch. However, my proposal was met with feedback to prioritize, as I would not have time to finish redesigning from the ground up. I had to take a step back and ask myself,

The purpose of my redesign was to help maximize learning, which could be measured by analyzing the learning data collected on DataShop, with smoother learning curves indicating better learning. I decided to prioritize the issues to tackle based on four criteria: (1) issue severity; (2) teacher input; (3) technological complexity; and (4) greatest impact.
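To make the four criteria concrete, here is a minimal sketch of how such a ranking could be computed. It is an illustration, not the framework I actually used: the `Issue` fields, the 1 to 5 scales, the equal weighting, and the sample scores are all made-up assumptions.

```typescript
// Hypothetical issue record; field names and 1-5 scales are assumptions.
interface Issue {
  name: string;
  severity: number;        // 1 (minor) to 5 (blocks learning)
  teacherPriority: number; // 1 (low) to 5 (high), e.g. from the card sort
  complexity: number;      // 1 (low-lift) to 5 (hard to build)
  impact: number;          // 1 (small) to 5 (large) expected effect on learning
}

// Equal weights are an assumption; complexity counts against an issue.
function priorityScore(issue: Issue): number {
  return issue.severity + issue.teacherPriority + issue.impact - issue.complexity;
}

function prioritize(issues: Issue[]): Issue[] {
  // Copy before sorting so the original backlog order is preserved.
  return [...issues].sort((a, b) => priorityScore(b) - priorityScore(a));
}

// Illustrative scores only; these are not measured values.
const backlog: Issue[] = [
  { name: "Row of Xs after failed attempts", severity: 4, teacherPriority: 5, complexity: 2, impact: 4 },
  { name: "Full redesign from scratch", severity: 3, teacherPriority: 3, complexity: 5, impact: 5 },
  { name: "Duplicate hint buttons", severity: 3, teacherPriority: 4, complexity: 1, impact: 3 },
];

const ranked: string[] = prioritize(backlog).map((issue) => issue.name);
```

Subtracting complexity is one way to favor low-lift fixes within a 10-week timeline; a different weighting would change the order.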

Design Changes

Priorities 😤

Lynnette's previous UI was challenging for students with visual impairments and learning disorders. Smaller screens, such as mobile and tablet, exacerbated this. To improve its accessibility, I made changes to Lynnette's colors and typography that were low-lift, engineering-wise. I removed the color blocks to show the connection between the problem and subsequent solving steps.

Lynnette collected an X for every failed attempt at each step, often culminating in an entire row of Xs. To cultivate a kinder system that rewards learning rather than punishing attempts, I changed the feedback so that each step shows a single mark instead of one for every attempt.
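The idea can be sketched as collapsing an attempt log into one mark per step. This is a simplified illustration rather than Lynnette's actual code: the `Attempt` shape is hypothetical, and I am assuming here that the most recent attempt decides which mark is shown.

```typescript
// Hypothetical attempt record; not Lynnette's real data model.
interface Attempt {
  stepId: string;
  correct: boolean;
}

type StepFeedback = "correct" | "incorrect";

// Collapse every attempt into a single feedback mark per step.
// Assumption: the latest attempt on a step determines its mark.
function feedbackPerStep(attempts: Attempt[]): Map<string, StepFeedback> {
  const feedback = new Map<string, StepFeedback>();
  for (const attempt of attempts) {
    // Later attempts overwrite earlier ones, so each step keeps one entry.
    feedback.set(attempt.stepId, attempt.correct ? "correct" : "incorrect");
  }
  return feedback;
}

const marks = feedbackPerStep([
  { stepId: "step-1", correct: false },
  { stepId: "step-1", correct: false },
  { stepId: "step-1", correct: true },
  { stepId: "step-2", correct: false },
]);
```

With this log, the old behavior would have shown three Xs in total; the sketch yields one mark for each of the two steps.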

I implemented a single-line type-in, nudging students to consider variables on both sides and making the problem and input fields visually consistent. This better matches the tendency I observed in students to start typing their input from the left.

Students pressed the finish problem CTA prematurely when it was appended beneath an input field. Some also continued to input after pressing the CTA, indicating it was not clear when a problem was successfully solved. I tackled these issues by placing the finish problem CTA in a static location with dynamic text indicating success.
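A small sketch of the dynamic-text behavior, under my own assumptions: the button always renders in the same place, and only its label and enabled state change. The label strings and the `FinishButtonState` shape are placeholders, not the copy or code used in Lynnette.

```typescript
// Hypothetical view state for the finish-problem button.
interface FinishButtonState {
  label: string;
  enabled: boolean;
}

// The button stays in one place; only its text and state change.
// Assumption: it becomes pressable once every step is solved.
function finishButtonState(solvedSteps: number, totalSteps: number): FinishButtonState {
  const solved = totalSteps > 0 && solvedSteps >= totalSteps;
  return solved
    ? { label: "Problem solved! Finish", enabled: true }
    : { label: "Keep solving", enabled: false };
}

const midway = finishButtonState(2, 4);
const done = finishButtonState(4, 4);
```

Keeping the button disabled until the problem is solved is one way to address premature presses; the success label addresses students who kept typing after finishing.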

Hint iterations

Two pain points I discovered were that (1) students familiar with the system sometimes "gamed" it using hints; (2) students newer to Lynnette mistakenly pressed the hint button a few times before noticing the "next" CTA within the dialog. I scaffolded the hints to design for these pain points and used a single CTA with a message prompting students to click for hints as needed. I also disabled the CTA and removed the message at the end of the sequence.
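The scaffolded hint flow can be sketched as a small state holder. This is a minimal illustration under stated assumptions, not the shipped implementation: the `HintSequence` class, its member names, and the prompt text are all hypothetical.

```typescript
// Hypothetical model of a scaffolded hint sequence behind a single CTA.
class HintSequence {
  private readonly hints: string[];
  private shown = 0;

  constructor(hints: string[]) {
    this.hints = hints;
  }

  // Reveal the next hint, or null once the sequence is exhausted.
  next(): string | null {
    if (this.shown >= this.hints.length) return null;
    const hint = this.hints[this.shown];
    this.shown += 1;
    return hint ?? null;
  }

  // The CTA is disabled once the last hint has been shown.
  get ctaEnabled(): boolean {
    return this.shown < this.hints.length;
  }

  // The prompt is removed together with the CTA at the end of the sequence.
  get promptMessage(): string | null {
    return this.ctaEnabled ? "Click for a hint if you need one" : null;
  }
}

const sequence = new HintSequence(["Look at both sides.", "Subtract 3 from both sides."]);
const firstHint = sequence.next();
const enabledAfterFirst = sequence.ctaEnabled;
const secondHint = sequence.next();
```

Because one button drives the whole sequence, there is no separate "next" control inside the dialog to miss, and the disabled end state signals that no further hints remain.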

There were originally two hint buttons—one under each side of the type-in fields—that triggered the same dialog. Consolidating the hints removes ambiguity. Placing them in a static location encourages students first to try problem-solving on their own with the understanding that hints are always accessible.

Let's return to our goals.

Prototype

Final Demo

Impact

Design to maximize learning 🧠

From changing the look and feel to breaking down mental models behind user flows, I demonstrated to the team that design matters. My redesigned version of Lynnette is now used in classrooms by researchers testing various learning theories. It has helped improve student learning—as shown through smoother learning curves—and received positive feedback from students and teachers.